How to Deliver Data Quality with Data Governance

By Myles Suer

Published on October 4, 2024

At Dataversity’s Data Quality and Information Quality Conference, Ryan Doupe, the Chief Data Officer of American Fidelity, held a thought-provoking session that resonated with me. In his “9-Step Process for Better Data Quality,” Ryan discussed the processes for generating data that business leaders consider trustworthy.

To be clear, data quality is one of several policy types within data governance, as defined by Gartner and the Data Governance Institute:

Quality policies for data and analytics set expectations about the “fitness for purpose” of artifacts across various dimensions.

– Gartner, “Data and Analytics Governance Requires a Comprehensive Range of Policy Types”

Most in this space are familiar with data governance rules that enforce compliance. But what about governance rules that enforce quality?

After listening to Ryan, I would contend that in contrast to purely defensive forms of data governance, data quality rules are not focused on role-based access.

Instead, data quality rules promote awareness and trust. They alert all users to data issues and guide data stewards to remediate data from a data source.

Before we discuss Ryan’s perspective on data quality, let’s consider the impact data quality management can have on an organization, some related challenges, and things to consider as you work to improve data quality.

Understanding the ROI of data quality management

Investing in data quality management delivers measurable returns through reduced operational costs, improved decision-making, and enhanced customer satisfaction.

Organizations that implement robust data quality programs should expect reductions in data-related errors and poor decision-making, resulting in significant cost savings through decreased rework, improved productivity, and better business outcomes. The ROI of data quality management extends beyond direct cost savings to include improved regulatory compliance, enhanced customer trust, and more accurate analytics capabilities that drive strategic business decisions.

Data quality compliance and regulatory requirements

Data quality plays a crucial role in meeting regulatory compliance requirements across industries, from GDPR and CCPA to industry-specific regulations. Organizations must implement robust data quality frameworks that ensure accurate, complete, and timely data for regulatory reporting while maintaining proper documentation of data lineage and quality controls. This approach not only satisfies regulatory requirements but also provides a foundation for better business decision-making and risk management.

Why data quality is essential for business intelligence and analytics

Data quality is critical to business intelligence and analytics, as accurate data underpins every insight and decision derived from these processes. Poor data quality leads to unreliable analytics, which can impact strategic decisions and reduce trust in BI systems.

Effective data governance frameworks help maintain high data quality standards, ensuring that analytics teams can rely on accurate, timely, and relevant data. When data quality is prioritized, organizations can leverage their BI and analytics tools to make more informed, data-driven decisions.

How data quality and governance support data-driven decision-making

Data quality and governance are foundational to data-driven decision-making, as they ensure the accuracy, consistency, and reliability of the data used to guide strategic choices.

A robust governance framework enforces quality standards, providing decision-makers with high-quality data they can trust. When organizations prioritize data governance and quality, they empower teams across departments to make well-informed decisions based on timely and relevant insights, leading to better business outcomes and a competitive advantage in their industry.

Key metrics for measuring data quality success

Effective data quality measurement requires a comprehensive set of metrics that track accuracy, completeness, consistency, timeliness, and validity of data across the enterprise. Organizations should establish baseline measurements for data quality dimensions, track improvement over time, and tie these metrics to specific business outcomes such as reduced error rates in reporting, decreased time spent on data cleansing, and improved customer satisfaction scores. Regular monitoring of these metrics enables organizations to identify areas for improvement and demonstrate the value of data quality initiatives to stakeholders.
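To make two of these dimensions concrete, here is a minimal Python sketch of how completeness and validity might be computed as baseline measurements. The sample records, the field names, and the simple email pattern are all assumptions made for illustration; they do not come from Ryan's session or from any particular tool.

```python
import re

# Hypothetical sample records; field names are illustrative only.
records = [
    {"customer_id": "C001", "email": "ana@example.com"},
    {"customer_id": "C002", "email": None},
    {"customer_id": "C003", "email": "not-an-email"},
    {"customer_id": "C004", "email": "li@example.com"},
]

# Deliberately simple pattern (an assumption, not a full email validator).
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(rows, field):
    """Share of rows where the field is present and non-empty."""
    if not rows:
        return 0.0
    filled = sum(1 for row in rows if row.get(field) not in (None, ""))
    return filled / len(rows)


def validity(rows, field, pattern):
    """Share of non-empty values that match the expected pattern."""
    values = [row[field] for row in rows if row.get(field) not in (None, "")]
    if not values:
        return 0.0
    return sum(1 for value in values if pattern.match(value)) / len(values)


email_completeness = completeness(records, "email")  # 3 of 4 rows filled
email_validity = validity(records, "email", EMAIL_PATTERN)  # 2 of 3 filled values valid
```

Recording these numbers on a schedule gives you the baseline and the trend line that the paragraph above describes.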

Data quality challenges in the modern enterprise

Today's enterprises face unprecedented data quality challenges due to the increasing volume, variety, and velocity of data flowing through their systems. The proliferation of data sources, legacy systems integration issues, and the need for real-time data processing create complex data quality management scenarios that require sophisticated solutions.

Organizations must address these data quality management challenges while maintaining compliance with evolving regulatory requirements and meeting growing business demands for accurate, reliable data.

Data governance framework: Building blocks for enterprise data quality using Doupe’s 9-step framework

Ryan recommends a nine-step framework for managing data quality with data governance. He also points out where data tools can be applied to help with these process steps.

Step 1: Identifying critical data elements (CDEs): Essential first steps in data governance

This step sets the scope of your data quality program. In other words, you determine which items should be under the control of a data governance program focused on data quality. Start by identifying the critical data elements for the enterprise, which are typically those known to be vital to the success of the organization. These elements become in scope for the data quality program.

Step 2: Creating standardized data definitions: building your business glossary

With this step, you create a glossary for CDEs. Here each critical data element is described so there are no inconsistencies between users or data stakeholders.

Step 3: Assessing business impact: How data quality affects enterprise operations

What’s the business impact of critical data elements being trustworthy… or not? In this step, you connect the integrity of each critical data element to business results, building on the shared definitions from step 2. This work enables business stewards to prioritize data remediation efforts.

Step 4: Mapping data sources to understand your enterprise data landscape

This step is about cataloging data sources and discovering data sources containing the specified critical data elements. A comprehensive mapping of data sources creates a clear picture of how data flows through your organization, identifying every system, application, and database that creates, processes, or stores critical data elements.
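As a rough illustration of this mapping, the sketch below inverts a small catalog of sources and columns into a lookup from each critical data element to the sources that hold it. The catalog contents and the `map_cdes_to_sources` helper are hypothetical; a real data catalog would supply this inventory through its own metadata.

```python
from collections import defaultdict

# Hypothetical catalog: each source lists the columns it holds.
catalog = {
    "crm.customers": ["customer_id", "email", "postal_code"],
    "billing.invoices": ["invoice_id", "customer_id", "amount"],
    "web.sessions": ["session_id", "page"],
}

# Hypothetical critical data elements chosen in step 1.
critical_data_elements = {"customer_id", "email"}


def map_cdes_to_sources(catalog, cdes):
    """Return a dict mapping each CDE to the sorted list of sources containing it."""
    mapping = defaultdict(list)
    for source, columns in sorted(catalog.items()):
        for column in columns:
            if column in cdes:
                mapping[column].append(source)
    return dict(mapping)


cde_sources = map_cdes_to_sources(catalog, critical_data_elements)
```

Sources that hold no critical data elements (here, `web.sessions`) drop out of the mapping, which keeps the profiling work in the next step focused.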

Step 5: Data profiling techniques for analyzing data quality patterns and issues

With data cataloged, data sources that contain CDEs are then profiled. This is done by collecting data statistics and answering key questions. For example: How many records and rows exist? What are the minimum and maximum values for each data element? How frequently does each value occur? What patterns does the data follow?
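The profiling questions above can be sketched in a few lines of Python. This is a minimal illustration, not the output of any specific profiling tool: the `profile_column` helper and the postal-code sample are assumptions, and the "pattern" here is simply each value with digits replaced by `9` and letters by `A`.

```python
import re
from collections import Counter


def shape(value):
    """Reduce a value to its pattern: digits become 9, letters become A."""
    text = re.sub(r"[0-9]", "9", str(value))
    return re.sub(r"[A-Za-z]", "A", text)


def profile_column(values):
    """Collect basic profiling statistics for one column of values."""
    present = [v for v in values if v is not None]
    return {
        "row_count": len(values),
        "null_count": len(values) - len(present),
        "distinct_count": len(set(present)),
        "min": min(present) if present else None,
        "max": max(present) if present else None,
        "top_values": Counter(present).most_common(3),
        "patterns": Counter(shape(v) for v in present).most_common(3),
    }


# Hypothetical postal-code column with a short value, a null, and a stray letter.
postal_codes = ["73102", "73102", "7310", None, "73A02"]
profile = profile_column(postal_codes)
```

In this sample, the dominant pattern is five digits, and the two outliers (`9999` and `99A99`) are exactly the kind of finding that feeds the rules in the next step.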

Step 6: Establishing data quality rules: Guidelines for data validation and control

With profiling complete, you can use a data quality tool to create rules supporting data quality. AI and machine learning can automatically create and enforce such rules, or people can use the data extracted from data profiling to create the rules manually.
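For the manual route, one way to picture a data quality rule is as a named check attached to a field. The sketch below is an assumption about how such rules could be expressed in plain Python; the `QualityRule` class, the rule names, and the sample rows are hypothetical and do not reflect how any particular data quality tool stores its rules.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class QualityRule:
    name: str
    field: str
    check: Callable[[object], bool]  # returns True when the value passes


# Hypothetical rules derived from profiling a postal-code column.
RULES = [
    QualityRule("postal_code_present", "postal_code",
                lambda v: v not in (None, "")),
    QualityRule("postal_code_five_digits", "postal_code",
                lambda v: isinstance(v, str) and len(v) == 5 and v.isdigit()),
]


def evaluate(rows, rules):
    """Return a list of (row_index, rule_name) for every failed check."""
    failures = []
    for index, row in enumerate(rows):
        for rule in rules:
            if not rule.check(row.get(rule.field)):
                failures.append((index, rule.name))
    return failures


rows = [
    {"postal_code": "73102"},
    {"postal_code": "7310"},
    {"postal_code": None},
]
failures = evaluate(rows, RULES)
```

Keeping rules as data rather than scattered `if` statements makes it easier to review them with business stewards and to add new ones as profiling uncovers more patterns.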

Step 7: Measuring data quality with key metrics and KPIs for success

With data quality rules completed and firing, you can collect data quality metrics. These metrics inform users of suspect data and alert data stewards to data needing remediation. In Alation, these metrics are added directly into the data catalog, so users who discover data know about any issues in real time.
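As a simple illustration of turning rule outcomes into metrics, the sketch below computes a pass rate per rule and lists the rules that fall below a threshold. The rule names, the sample outcomes, and the 95% threshold are assumptions chosen for the example; this is not how Alation or any partner tool calculates its scores.

```python
def pass_rates(results):
    """Map each rule name to its pass rate; results holds lists of booleans."""
    return {
        rule: sum(outcomes) / len(outcomes)
        for rule, outcomes in results.items()
        if outcomes
    }


def needs_remediation(rates, threshold=0.95):
    """Return the rules whose pass rate falls below the (assumed) threshold."""
    return sorted(rule for rule, rate in rates.items() if rate < threshold)


# Hypothetical per-row outcomes for three rules (True means the row passed).
rule_results = {
    "postal_code_present": [True, True, True, False],
    "postal_code_five_digits": [True, False, False, False],
    "customer_id_unique": [True, True, True, True],
}

rates = pass_rates(rule_results)
flagged = needs_remediation(rates)
```

The pass rates are what a user would see next to a data asset, while the flagged list is what a data steward would work through.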

Step 8: Identifying authoritative data sources for single-source-of-truth implementation

Determining authoritative data sources is a key output of a data quality program. For this step, quality metrics gauge data sources to determine if the data is of sufficient quality. This allows users to quickly find the most trustworthy data for analysis. They can then collaborate around that data via shared conversations and queries hosted in the data catalog.
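To show the idea of gauging sources by their quality metrics, here is a small sketch that picks the highest-scoring source above a minimum bar. The source names, the scores, and the 0.9 minimum are hypothetical; in practice, the choice of an authoritative source also depends on ownership and business context, not on a score alone.

```python
def authoritative_source(scores, minimum=0.9):
    """Pick the highest-scoring source that meets the minimum, else None."""
    eligible = {name: score for name, score in scores.items() if score >= minimum}
    if not eligible:
        return None
    return max(eligible, key=eligible.get)


# Hypothetical quality scores for sources holding the same critical data element.
source_scores = {"crm": 0.97, "billing": 0.91, "legacy_mainframe": 0.72}
best = authoritative_source(source_scores)
```

Returning `None` when nothing clears the bar is a deliberate choice in this sketch: it signals that remediation should come before any source is labeled authoritative.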

Step 9: Data quality remediation strategy, implementation, and action plans

Finally, for data with systemic issues, it is important to address the root cause of data quality issues and determine how to solve the source data issues. This can involve data cleansing or training of data entry personnel.

Maximizing data quality through data catalog governance: A step-by-step guide

Alation’s system for data governance syncs up nicely with Ryan’s nine-step framework for improving data quality with data governance:

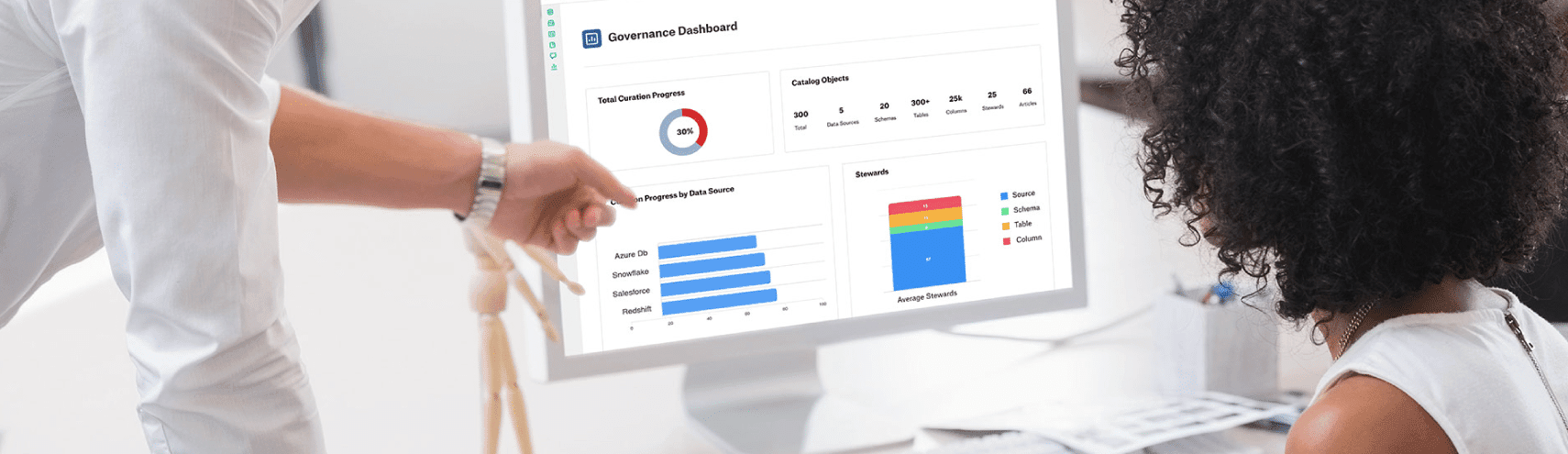

Graph of how to sync data quality via governance into the data catalog.

Let’s take a look at how Ryan’s system may be leveraged to bring data quality-focused governance into the data catalog:

Establish a governance framework by determining CDEs, data definitions, and business impacts (steps 1-3, above).

Populate the data catalog, empower data stewards, and curate assets by working together to catalog data sources while connecting data assets to business goals. This addresses the need Ryan highlights to profile data: data is profiled from the selected data sources, and this information is used to create data quality rules (steps 4, 5, and 6).

Apply policies and controls to establish data quality metrics to guide user behavior (step 7).

Drive community collaboration to use trusted data and integrate human wisdom. Your community can now find and use the best data with help from quality scores. They can also add their own tribal knowledge into the data catalog, further establishing the most authoritative data sources (step 8).

Monitor and measure with data quality remediation plans within the data catalog. These are useful in finding recurring data issues, which will influence how you adapt your data governance framework. They also inform how you clean data and reeducate personnel at the data source (step 9).

Purpose-built data governance solutions like a data catalog can also help support data quality management and solve common challenges through automation, data lineage, metadata management, and more.

Automating data quality checks through data governance

Automation is key to maintaining data quality in large, complex datasets, as it reduces human error and ensures consistency. Data governance frameworks support automated quality checks, including data validation, deduplication, and anomaly detection, which help identify and rectify data issues before they impact decision-making. Automated processes are scalable and efficient, making them especially valuable for organizations managing vast data assets. Through automation, companies can maintain data quality continuously, reducing manual effort and ensuring long-term data integrity.
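Two of the automated checks named above, deduplication and anomaly detection, can be sketched with the Python standard library. This is a minimal illustration under stated assumptions: the `deduplicate` and `is_anomalous` helpers are hypothetical, duplicates are matched on a single key, and the anomaly test is a basic z-score against recent history rather than the method any specific product uses.

```python
from statistics import mean, stdev


def deduplicate(rows, key):
    """Keep the first row seen for each key value."""
    seen = set()
    unique = []
    for row in rows:
        if row[key] not in seen:
            seen.add(row[key])
            unique.append(row)
    return unique


def is_anomalous(history, latest, max_z=3.0):
    """Flag latest if it is more than max_z standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough history to judge
    spread = stdev(history)
    if spread == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / spread > max_z


# Hypothetical rows with one repeated id.
rows = [{"id": 1, "name": "Ana"}, {"id": 2, "name": "Li"}, {"id": 1, "name": "Ana"}]
unique_rows = deduplicate(rows, "id")

# Hypothetical daily row counts for a table, followed by a sudden drop.
daily_row_counts = [1000, 1020, 990, 1010, 1005]
alert = is_anomalous(daily_row_counts, 150)
```

A scheduled job could run checks like these after each load and publish the results to the catalog, so issues surface before the data reaches a dashboard.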

The link between data lineage and data quality in governance

Data lineage, which tracks data's journey from source to destination, plays a crucial role in maintaining data quality. By mapping how data flows and transforms across systems, data lineage enables organizations to monitor quality at each stage of the data lifecycle. When data lineage is integrated into governance frameworks, it enhances transparency and helps stakeholders understand data context, making it easier to identify and resolve quality issues. Establishing data lineage as a standard governance practice empowers organizations to ensure consistent, accurate data across processes.

The role of metadata in data quality and governance

Metadata is a cornerstone of effective data governance, directly influencing data quality by providing essential context about data sources, lineage, and transformations. By capturing metadata in a structured way, organizations gain insights into the origin, accuracy, and usage of their data assets. This transparency helps maintain data quality by enabling users to trust the data they are working with. Through metadata management, data catalogs can automate quality monitoring and governance, ensuring that data remains consistent, reliable, and useful for all stakeholders.

Data quality challenges and how data governance solves them

Maintaining data quality is challenging due to factors like data silos, inconsistent standards, and rapid data growth. Data governance frameworks address these issues by establishing unified quality standards, defining ownership, and promoting accountability. By setting data quality policies and implementing validation checks, governance frameworks reduce errors, improve data consistency, and streamline access to high-quality data. This approach ensures that organizations can overcome common data quality obstacles, creating a more reliable data environment for decision-making.

Data quality implementation: Best practices using modern data catalog solutions

Data governance and data quality are massive undertakings that must work as one. An integrated tech stack, with the right blend of tools, is a key asset for addressing these challenges together. A robust ecosystem of tools enables companies to solve a wider range of problems.

Partnerships are a key piece of that ecosystem. They enable organizations to be more strategic and relevant to customers. For this reason, Alation has partnered with leading data quality vendors (BigEye, Anomalo, and Soda), as well as other best-in-class vendors, as part of Alation’s Open Data Quality Initiative.

These integrations let us provide a whole product. Here, Alation adds a world-class data governance app and data catalog to our partners’ data quality tools to deliver an integrated data observability platform. Together with these data quality and observability vendors, we solve customer problems in data quality with an integrated data governance process. In other words, we provide each of the capabilities described above in Alation’s data governance model, but in this case, we do so for data quality using a data catalog.

Best practices for maintaining data quality in large organizations

Maintaining data quality in large organizations requires consistent standards, cross-departmental collaboration, and automated quality controls. Best practices include establishing data stewardship roles, creating clear data quality metrics, and implementing data validation checks at ingestion points. A comprehensive data governance framework is crucial for standardizing these practices across departments, fostering a culture of quality that enables scalable, organization-wide data integrity. These strategies help prevent data silos, reduce inaccuracies, and create a unified approach to data quality management.

How data stewardship drives data quality in a governance model

Data stewardship is essential to ensuring data quality within a governance framework, as it designates individuals or teams responsible for managing data assets’ integrity. Data stewards oversee data lifecycle processes, from data acquisition to usage, ensuring adherence to quality standards and addressing data issues promptly. By assigning clear stewardship roles, organizations can maintain high-quality data while fostering accountability and transparency. Data stewardship also reinforces data governance initiatives by ensuring that quality practices are consistently applied across the organization.

Final thoughts on achieving data quality through data governance

Ryan Doupe has presented a useful guide for establishing data quality with data governance. In this blog, I’ve demonstrated how you might leverage that framework with a data catalog. Alation’s partnership with top data quality and observability vendors enables us to deliver an end-to-end solution for data quality.

Curious to learn more? Book a demo with us today.

1. https://www.gartner.com/en/documents/3986752/data-and-analytics-governance-requires-a-comprehensive-r
